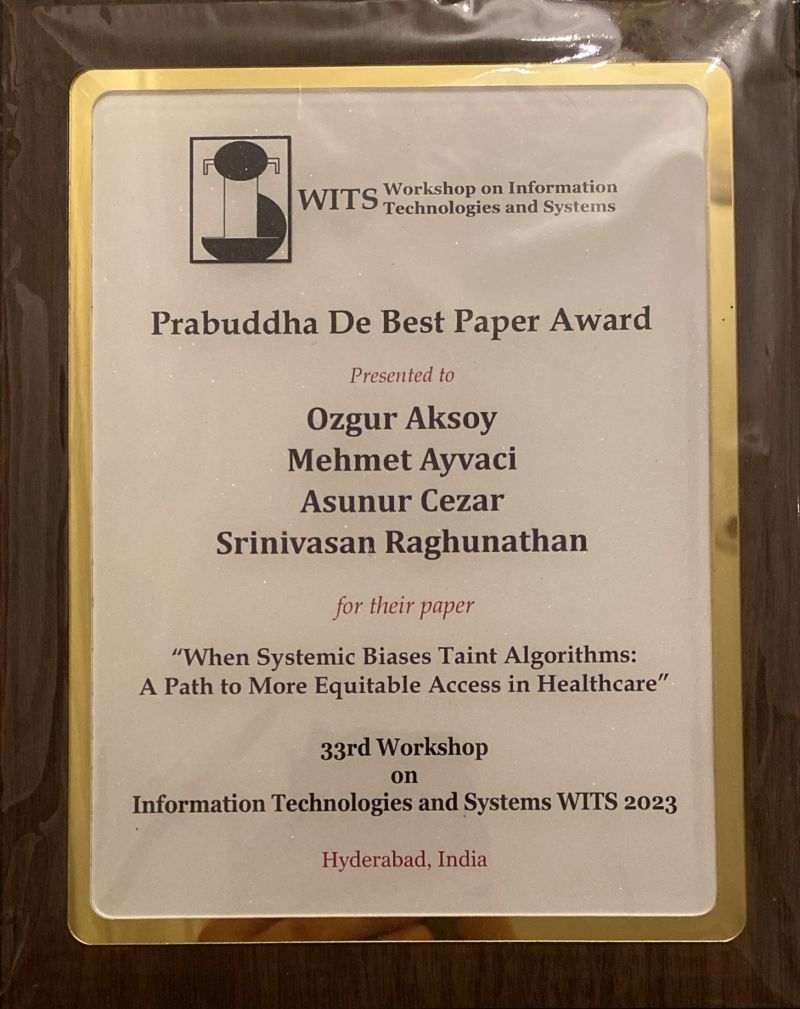

This study, titled "When Systemic Biases Taint Algorithms: A Path to More Equitable Access in Healthcare," investigates the impact of systemic biases on algorithmic decision-making. We explore how societal inequalities reflected in the training data can affect decisions (i.e., interventions) made on the basis of predictions. To reduce algorithmic bias, we have developed an approach that takes social factors into account within a prediction-decision framework. Our research demonstrates that efforts to ensure algorithmic fairness can help mitigate the negative impact of algorithmic bias at the prediction stage. However, even when predictions are fair, reducing bias does not always reduce disparities in decision outcomes, particularly when the decision-maker has a tight budget or when the effectiveness of the intervention differs between advantaged and disadvantaged groups.
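To make the last point concrete, here is a toy sketch. It is not the authors' prediction-decision framework. Two groups receive identical predicted risks, so the predictions are "fair" in the simple sense of matching score distributions. A limited budget treats the highest-risk individuals, and the intervention is assumed to remove a different share of risk in each group. The group labels, risk values, budget, and effectiveness numbers are all hypothetical.

```python
from typing import Dict, List, Tuple

# (group, predicted risk of an adverse outcome); all values are hypothetical
Person = Tuple[str, float]


def expected_adverse_outcomes(
    people: List[Person], budget: int, effectiveness: Dict[str, float]
) -> Dict[str, float]:
    """Treat the `budget` highest-risk people and return the expected number
    of adverse outcomes per group. `effectiveness[g]` is the assumed fraction
    of risk that the intervention removes for members of group g."""
    # Rank by predicted risk; Python's sort is stable, so ties keep input order.
    ranked = sorted(range(len(people)), key=lambda i: people[i][1], reverse=True)
    treated = set(ranked[:budget])
    totals: Dict[str, float] = {}
    for i, (group, risk) in enumerate(people):
        if i in treated:
            risk *= 1.0 - effectiveness[group]
        totals[group] = totals.get(group, 0.0) + risk
    return totals


# Identical risk profiles in both groups, interleaved so tied risks alternate groups.
people = [(g, r) for r in (0.8, 0.5, 0.2) for g in ("advantaged", "disadvantaged")]

equal = expected_adverse_outcomes(
    people, budget=2, effectiveness={"advantaged": 0.5, "disadvantaged": 0.5})
unequal = expected_adverse_outcomes(
    people, budget=2, effectiveness={"advantaged": 0.5, "disadvantaged": 0.25})

print({g: round(v, 3) for g, v in equal.items()})
# {'advantaged': 1.1, 'disadvantaged': 1.1}
print({g: round(v, 3) for g, v in unequal.items()})
# {'advantaged': 1.1, 'disadvantaged': 1.3}
```

Both groups get the same scores and the same number of treated members. The gap in expected outcomes therefore comes entirely from the assumed difference in intervention effectiveness, not from the predictions.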

MY POSTS

Our research examines the impact of algorithm-enabled decision support for sepsis monitoring on patient mortality in hospitals. We investigate whether and how this technology can save lives and, if so, when its impact is stronger or weaker. We find that algorithm-based monitoring of real-time patient data, together with the process innovations that accompany it, can reduce the relative risk of sepsis-related death by 45%. However, our research also shows that the effectiveness of the algorithm is sensitive to provider workload and to the number of inaccurate alerts providers experience. We also find that the mortality benefits of adopting the algorithm-based monitoring technology decrease over time; changes in workload and in experience with inaccurate alerts over time partly explain this diminishing impact. Our paper is available at https://pubsonline.informs.org/doi/abs/10.1287/msom.2023.1226
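A 45% relative risk reduction does not, by itself, say how many deaths are avoided. That depends on the baseline mortality rate. A relative reduction of 45% means the risk with the technology is 0.55 times the risk without it. The sketch below shows this arithmetic using made-up baseline rates, which are not figures from the paper.

```python
def relative_risk_reduction(risk_without: float, risk_with: float) -> float:
    """RRR = 1 - (risk with the intervention) / (risk without it)."""
    if risk_without <= 0:
        raise ValueError("baseline risk must be positive")
    return 1.0 - risk_with / risk_without


def risk_after_rrr(baseline_risk: float, rrr: float) -> float:
    """Risk implied by applying a relative risk reduction to a baseline risk."""
    return baseline_risk * (1.0 - rrr)


# Hypothetical baseline sepsis mortality rates, NOT figures from the paper.
for baseline in (0.10, 0.20, 0.30):
    treated = risk_after_rrr(baseline, 0.45)
    arr = baseline - treated  # absolute risk reduction
    print(f"baseline {baseline:.2f} -> {treated:.3f} (absolute reduction {arr:.3f})")
```

The relative reduction is the same in every row. The absolute reduction, and with it the number of lives saved per patient monitored, grows with the baseline risk.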

Under the prevailing fee-for-service (FFS) payment model, hospitals receive a fixed payment, while physicians receive separate fees for each treatment or procedure performed for a given diagnosis. Under FFS, the incentives of hospitals and physicians are misaligned, leading to large inefficiencies. Bundled payments (BP), an alternative to FFS that unifies payments to the hospital and physicians, are expected to encourage care coordination and reduce ever-increasing healthcare costs. However, because hospitals differ in how closely they work with physicians in influencing care (the level of physician integration), the expected effects of bundling in hospital systems with varying levels of physician integration remain unclear. Both academic and practical understanding of hospitals' and physicians' bundling incentives is lacking. Our study builds on and extends the recent Operations Management literature on alternative payment models. We formulate game-theoretic models to study (1) the impact of the level of integration between the hospital and physicians on the uptake of BP, and (2) the consequences of bundling for overall care quality and costs/savings across the spectrum of integration levels.
We find that (1) hospitals with low to moderate levels of physician integration have more incentive to bundle than hospitals with high physician integration; (2) to engage physicians, hospitals need to gainshare savings with them, a mechanism that was not available under traditional FFS-based payment models; (3) when feasible, BP is expected to reduce care intensity, and in hospital systems with low to moderate levels of physician integration, this reduction is expected to improve quality and generate cost savings; (4) however, when bundling happens in hospital systems with relatively high physician integration, BP may lead to underprovision of care and, ultimately, lower quality; and (5) when hospitals are also held accountable for quality, the involved parties have stronger incentives to bundle, yet the quality vulnerabilities due to bundling can be exacerbated. Our findings have important managerial implications for policymakers, payers such as CMS, and hospitals: (1) policymakers and payers should be aware of, and account for, the potential negative effects of the current BP design on a subset of hospital systems, including a possible reduction in quality; and (2) in deciding whether to enroll in BP, hospitals should consider their level of physician integration and its possible implications for quality. Based on our findings, we expect that widespread use of BP may trigger further market concentration via hospital mergers or service-line closures. Our paper is available at https://pubsonline.informs.org/doi/full/10.1287/msom.2023.1187

The College of Healthcare Operations Management of the Production and Operations Management Society (POMS) selected our paper "To Catch A Killer: A Data-Driven Personalized and Compliance-Aware Sepsis Alert System" as the winner of its 2021 Best Paper Award. My co-authors on this paper are Zahra Mobini and Ozalp Ozer from the Naveen Jindal School of Management, UT Dallas. In this research, we design a data-driven alert system to improve the process of care for a prevalent, deadly, and costly condition: sepsis. Our system customizes alert decisions to a given patient's health status and to caregivers' compliance behavior. Backtesting the proposed system on actual hospital operations demonstrates its potential to improve sepsis care. Compared with rule-based alert systems, it catches 22% more sepsis cases and triggers alerts 39 hours earlier on average (range: 29 to 53 hours). Managers, providers, and developers of alert systems in hospitals can use our proposed design to adjust typical rule-based alert systems so that they personalize alerts and incorporate caregiver behavior in the inpatient setting. Our paper is available at SSRN: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3805931

Throughout 2020, no story in healthcare analytics was as prevalent and all-consuming as the coronavirus pandemic. As nations and healthcare networks tried to respond to the crisis, one of the biggest unknowns remained which patients would face severe illness and death. We used a detailed COVID-19 dataset created by the Mexican government to predict disease severity and mortality for a given patient at the time of hospital admission. The dataset contained clinical and demographic information on 1,048,575 patients across 38 variables, such as age, gender, pneumonia onset, pre-existing conditions, and lab test results. Using R, we developed decision tree and logistic regression models to predict patients' COVID-19 disease progression and death. We determined that 55 was the critical age threshold for severe disease progression. Our models achieved an AUC of 0.8978 and a misclassification rate of 17%. Aside from age, hypertension and endocrine system conditions were significant predictors of severe disease. Our predictive models can help healthcare organizations identify the patient cohorts most at risk of severe disease and death at the time of hospital admission. They can also support public efforts, such as vaccination, to target those who are most vulnerable.
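The two metrics reported above measure different things. AUC does not depend on a threshold. It equals the probability that a randomly chosen patient who had the outcome receives a higher predicted risk than a randomly chosen patient who did not. The misclassification rate, by contrast, depends on the cutoff used to turn probabilities into yes/no predictions. The pure-Python sketch below computes both on a handful of made-up scores. The 0.5 cutoff is an assumption, since the post does not state the threshold used.

```python
from typing import Sequence


def auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as the probability that a random positive outranks a random
    negative, with ties counted as half (the Mann-Whitney formulation)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def misclassification_rate(scores: Sequence[float], labels: Sequence[int],
                           threshold: float = 0.5) -> float:
    """Share of cases whose thresholded prediction disagrees with the label."""
    errors = sum((s >= threshold) != (y == 1) for s, y in zip(scores, labels))
    return errors / len(labels)


# Made-up predicted probabilities of severe disease and observed outcomes.
scores = [0.9, 0.8, 0.4, 0.35, 0.2, 0.1]
labels = [1, 1, 0, 1, 0, 0]
print(f"AUC = {auc(scores, labels):.3f}")  # AUC = 0.889 (8 of 9 pairs ranked correctly)
print(f"misclassification = {misclassification_rate(scores, labels):.3f}")  # 0.167 (1 of 6)
```

The pairwise loop is O(n_pos × n_neg) and is meant only to show the definition. For a dataset of about a million patients, a rank-based computation or a library routine such as scikit-learn's `roc_auc_score` is the practical choice.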

By Reinaldo Noriega, Omar Quispe, Blan Yamek, Sandy Tang

One of my recent papers, coauthored with Eren Ahsen (University of Illinois Urbana-Champaign) and Srinivasan Raghunathan (UT Dallas), was selected for the Best Paper Award by the journal Information Systems Research (ISR). The paper is titled "When Algorithmic Predictions Use Human-Generated Data: A Bias-Aware Classification Algorithm for Breast Cancer Diagnosis." As the title suggests, we develop a debiasing mechanism to address algorithmic bias in the context of building a decision support tool for breast cancer diagnosis. The paper is available on the ISR website at https://lnkd.in/ey4UBPn and on SSRN at https://lnkd.in/e3P4gbd

The innovative use of technology in responding to the COVID-19 crisis is unprecedented. The U.S. tech industry is devoting its resources, such as machine learning and cloud capabilities, to studying patient blood samples. The goal is to innovate.

As of 2019, the opioid epidemic had become a leading cause of death nationwide, costing the U.S. healthcare system $60.4 billion. We used insurance claims data to predict an insurance member's probability of being on long-term opioid therapy (LTOT) at the time of the initial opioid prescription. Using 52 features from 4 years of longitudinal data on 13,761 unique patients, we developed random forest, logistic regression, and decision tree models. The random forest model achieved an AUC of 0.84, compared with 0.80 for logistic regression and 0.76 for the decision tree. Variables important for LTOT prediction included the prescribing doctor's specialty, the total cost to the member, the opioid's relative potency, drug class, a chronic pain diagnosis, and prior prescriptions. Our model can help insurance companies intervene early by requiring prior authorization before an opioid prescription is filled. Once incorporated into operations, the predictions can help in the fight against the U.S. opioid crisis and, in the process, save lives and money for all stakeholders.
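The post does not say how variable importance was measured. Random forests are often summarized with impurity-based importance or with permutation importance. As a hedged illustration of the second approach, the sketch below shuffles one feature column at a time and records the average drop in accuracy. A feature the model ignores scores zero. The toy model, the data, and the choice of accuracy as the metric are assumptions made for illustration only.

```python
import random
from typing import Callable, List, Sequence

Row = List[float]
Model = Callable[[Row], int]


def accuracy(model: Model, X: Sequence[Row], y: Sequence[int]) -> float:
    """Fraction of rows whose predicted class matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model: Model, X: Sequence[Row], y: Sequence[int],
                           n_repeats: int = 10, seed: int = 0) -> List[float]:
    """Mean drop in accuracy when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Copy each row, replacing only feature j with its shuffled value.
            X_perm = [list(row[:j]) + [v] + list(row[j + 1:])
                      for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical toy data: feature 0 drives the label, feature 1 is noise.
X = [[0.9, 3.0], [0.8, 1.0], [0.7, 4.0], [0.2, 2.0], [0.1, 5.0], [0.3, 0.0]]
y = [1, 1, 1, 0, 0, 0]


def toy_model(row: Row) -> int:
    """Stands in for a trained classifier; it only looks at feature 0."""
    return 1 if row[0] > 0.5 else 0


print(permutation_importance(toy_model, X, y))
```

Because the toy model never reads feature 1, shuffling that column leaves accuracy unchanged, so its importance is exactly zero.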

By TOBY GLAZER, TANIYA DHAR, DIVYA PILLAY, JERRIL JACOB

Youth e-cigarette use has increased dramatically in recent years and has become a significant public health issue. How can schools use their resources and interventions most efficiently to prevent high-risk students from using e-cigarettes? We used the 2018 National Youth Tobacco Survey, administered by the Centers for Disease Control and Prevention (CDC), as our dataset to predict which students were at high risk of using e-cigarettes. Using our models, we characterized a generic “high-risk student” profile. The most significant predictors of e-cigarette use were curiosity about e-cigarettes, susceptibility to peer pressure, family history of e-cigarette use, previous tobacco use, and the opinion that e-cigarettes are not harmful. School districts can use our prediction models to screen students and identify those at higher risk of using e-cigarettes. Based on these predictions, school officials can then target interventions more effectively.

By JERRY LI, MOLLY ANN THOMAS, SHREEJA CHEKURI, SILPA NIMMAGADDA